The central limit theorem (CLT) is the reason sums or averages of measurements appear to be well-described by a Gaussian.

Suppose we have $N$ independent random variables $X_n$ ($n = 1, \dots, N$), all drawn from the same distribution with finite variance.

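The central limit theorem states that the sample mean approaches a Gaussian distribution with the same mean as the measurements $X_n$ and a variance reduced by a factor of $N$. That is, if the mean and variance of $X_n$ are given by $\mu$ and $\sigma^2$, respectively, then the sample mean of $N$ measurements is approximately distributed as

$$S_N = \frac{1}{N}\sum_{n=1}^{N} X_n \sim \mathcal{N}\left(\mu, \frac{\sigma^2}{N}\right),$$

where $\mathcal{N}(\mu, \sigma^2)$ denotes the normal (Gaussian) distribution with mean $\mu$ and variance $\sigma^2$:

$$\mathcal{N}(x; \mu, \sigma^2) = \frac{1}{\sqrt{2\pi\sigma^2}} \exp\left(-\frac{(x-\mu)^2}{2\sigma^2}\right).$$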

This also means that the variance of the sample mean shrinks as $1/N$ as the sample size $N$ grows!
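The $1/N$ scaling is easy to check numerically. The sketch below assumes the same Gumbel distribution that the code further down samples from (location 1.5, scale 3.0), whose variance is $\pi^2 \beta^2 / 6$ for scale $\beta$. The helper name `variance_of_mean` and the fixed seed are illustrative choices, not part of the original notebook.

```python
import numpy as np

def variance_of_mean(N, sample_size=100_000, loc=1.5, scale=3.0, seed=0):
    """Empirical variance of the mean of N Gumbel draws (hypothetical helper)."""
    rng = np.random.default_rng(seed)
    draws = rng.gumbel(loc, scale, size=(sample_size, N))
    return draws.mean(axis=1).var()

# Variance of a single Gumbel variable with scale beta is pi^2 * beta^2 / 6.
sigma2 = np.pi**2 * 3.0**2 / 6

# Print N, the measured variance of the mean, and the CLT prediction sigma^2 / N.
for N in (1, 4, 16):
    print(N, variance_of_mean(N), sigma2 / N)
```

The second and third columns should agree to within sampling noise, and each fourfold increase in $N$ should cut the variance by roughly a factor of four.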

import numpy as np

import matplotlib.pyplot as plt

%matplotlib inlinedef sample(N, sample_size=100000):

X_n = np.random.gumbel(1.5, 3.0, [sample_size, N])

return X_n[:,:].mean(axis=1)

def show_gaussian_fit(sample, bins):

mu = sample.mean()

sigma = sample.std()

a = sample.size * (bins[1] - bins[0])

fig,ax = plt.subplots()

ax.hist(sample, bins);

# show_gaussian_fit(S_N, bins);

ax.plot(bins, a/(np.sqrt(2*np.pi)*sigma) * np.exp(-(bins-mu)**2 / (2*sigma**2)), 'r-')

ax.get_yaxis().set_ticks([]); # turn off y ticks

# Label plot.

ax.set_xlabel('x');

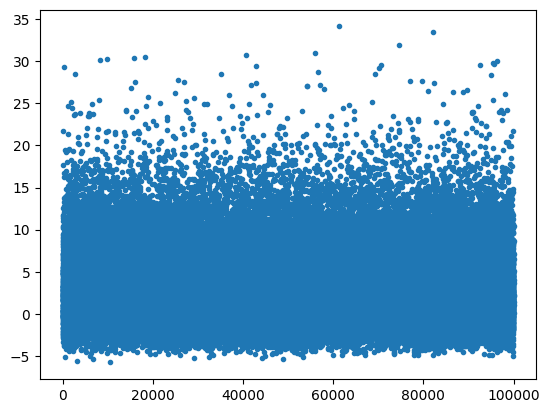

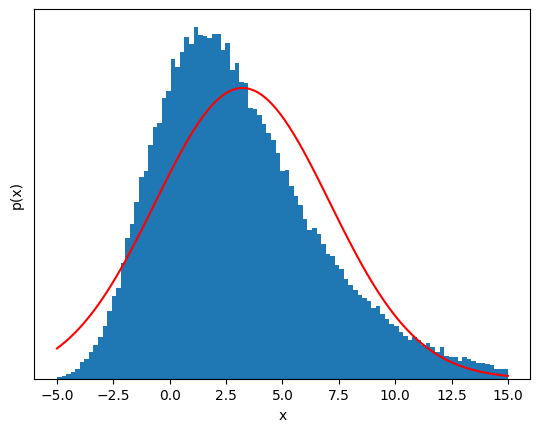

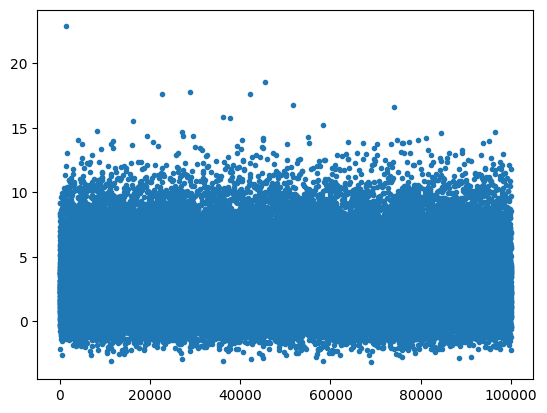

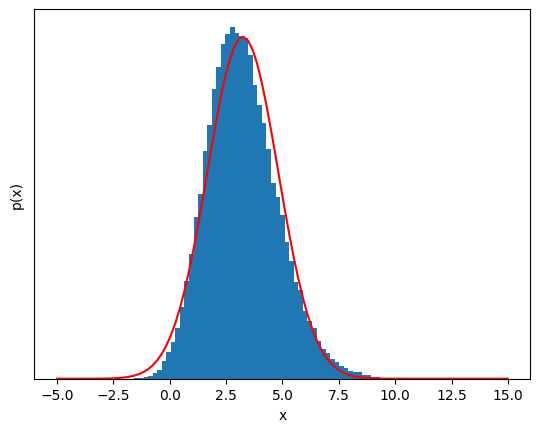

ax.set_ylabel('p(x)');X_n = sample(1)

plt.figure()
plt.plot(X_n, '.')
# Plot the distribution of X_n and the Gaussian fit for comparison
bins = np.linspace(-5, 15, 100)
show_gaussian_fit(X_n, bins)

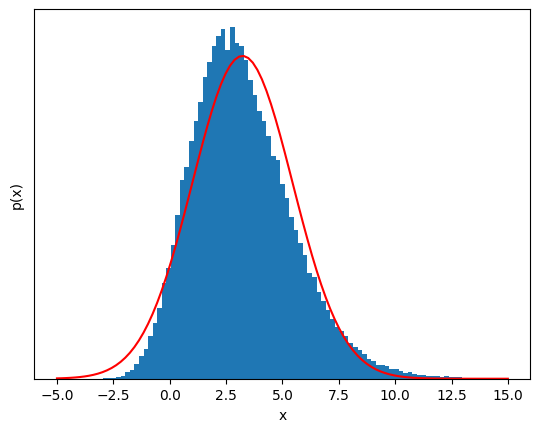

But if we average a few of these variables, the distribution starts to look Gaussian!

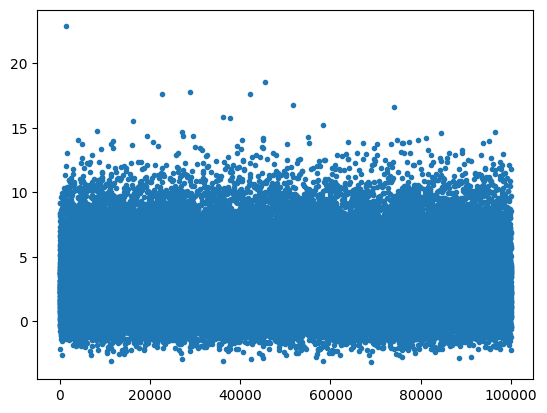

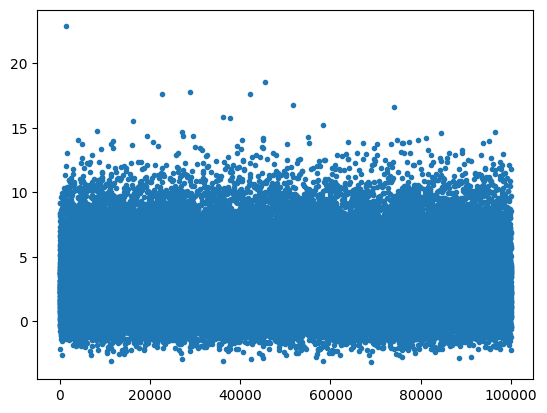

S_n = sample(3)
plt.figure()
plt.plot(S_n, '.')
# Fit the same sample that was plotted above, rather than drawing a new one.
show_gaussian_fit(S_n, bins)

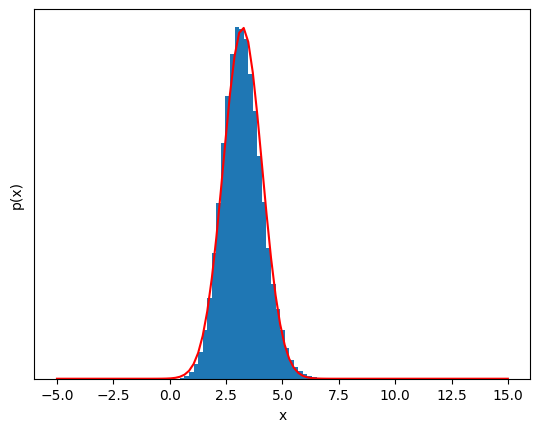

S_N = sample(6)
plt.figure()
plt.plot(S_N, '.')
show_gaussian_fit(S_N, bins)

With 20 samples, the distribution is almost impossible to distinguish from a Gaussian.

S_N = sample(20)
plt.figure()
plt.plot(S_N, '.')
show_gaussian_fit(S_N, bins)

This is almost Gaussian!
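One way to put a number on "almost Gaussian" is the skewness, which is zero for a Gaussian and, for the mean of $N$ independent, identically distributed variables, falls off as $1/\sqrt{N}$. The sketch below is an illustration under assumptions rather than part of the original notebook: the `skewness` helper and the seed are hypothetical choices, and it reuses the Gumbel parameters from the code above, for which the skewness of a single variable is about 1.14.

```python
import numpy as np

def skewness(x):
    """Sample skewness: third central moment divided by std**3 (hypothetical helper)."""
    centered = x - x.mean()
    return (centered**3).mean() / x.std()**3

rng = np.random.default_rng(0)

# Print N and the skewness of the mean of N Gumbel variables.
for N in (1, 3, 6, 20):
    means = rng.gumbel(1.5, 3.0, size=(100_000, N)).mean(axis=1)
    print(N, skewness(means))
```

The printed skewness should shrink steadily with $N$, which is the asymmetry of the histograms fading away.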

The most amazing thing is that this works for any random variable as long as its variance is finite. The underlying distribution can be extremely non-Gaussian, and the average of enough independent samples will still look Gaussian!
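As a quick check of this claim on a different, strongly skewed distribution, the sketch below averages exponential variables and compares the fraction of standardized means that fall within one standard deviation of the mean to the Gaussian value of about 0.683. The exponential distribution, the choice $N = 50$, and the seed are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
N = 50  # number of variables averaged (illustrative choice)
means = rng.exponential(1.0, size=(100_000, N)).mean(axis=1)

# Exponential(1) has mean 1 and variance 1, so the CLT predicts N(1, 1/N).
# Standardize the means so that the prediction becomes N(0, 1).
z = (means - 1.0) * np.sqrt(N)

# Fraction of standardized means within one standard deviation;
# a Gaussian would give about 0.683.
frac_within_1sigma = np.mean(np.abs(z) < 1)
print(frac_within_1sigma)
```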

The take-away message is this: If there is some summing or averaging of independent, identically distributed data in your measurement, that new variable will likely be well-described by a Gaussian model.

References and further reading

More information about the CLT can be found on Wikipedia: Central limit theorem